The business model of social media makes the algorithmic amplification of messages like hate speech and disinformation a lucrative proposition and a destabilizing force in a democratic society, the International Grand Committee on Disinformation and “Fake News” heard this week. Experts provided evidence on online harm, hate speech and electoral interference, Rappler reports.

Online political campaigning techniques are distorting our democratic political processes, according to Kate Jones, Director of the Diplomatic Studies Program at the University of Oxford. These techniques include:

- the creation of disinformation and divisive content;

- exploiting digital platforms’ algorithms, and using bots, cyborgs and fake accounts to distribute this content;

- maximizing influence by harnessing emotional responses such as anger and disgust; and

- micro-targeting on the basis of collated personal data and sophisticated psychological profiling techniques.

Some state authorities distort political debate by restricting, filtering, shutting down or censoring online networks, she writes in Online Disinformation and Political Discourse: Applying a Human Rights Framework, a new report for Chatham House, the London-based foreign policy think tank:

- Such techniques have outpaced regulatory initiatives and, save in egregious cases such as shutdown of networks, there is no international consensus on how they should be tackled. Digital platforms, driven by their commercial impetus to encourage users to spend as long as possible on them and to attract advertisers, may provide an environment conducive to manipulative techniques.

- International human rights law, with its careful calibrations designed to protect individuals from abuse of power by authority, provides a normative framework that should underpin responses to online disinformation and distortion of political debate. Contrary to popular view, it does not entail that there should be no control of the online environment; rather, controls should balance the interests at stake appropriately.

- The rights to freedom of thought and opinion are critical to delimiting the appropriate boundary between legitimate influence and illegitimate manipulation. …. States and digital platforms should consider structural changes to digital platforms to ensure that methods of online political discourse respect personal agency and prevent the use of sophisticated manipulative techniques.

Freedom House

The report echoes concerns raised in two other recent analyses:

- Digital platforms are the “new battleground” for democracy, and a Trojan horse for tyranny, the 2019 “Freedom on the Net” report from Freedom House warned this week.

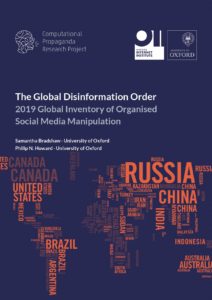

- The Computational Propaganda Project’s “2019 Global Inventory of Organized Social Media Manipulation” (above) found “evidence of organized social media manipulation campaigns in 70 countries, up from 48 countries in 2018.”

A business model that makes manipulation profitable is a foundational threat to markets and democracy, said Jim Balsillie of the Centre for International Governance Innovation. Democracy and markets only work when people can make free choices aligned with their interests, yet companies that monetize personal data are incentivized by and profit from undermining personal autonomy, he said.

His six recommendations to the International Grand Committee on Disinformation and “Fake News” draw on Models for Platform Governance, which addresses frameworks for governing the world’s most powerful digital platforms.

More shocking is news this week that two former Twitter employees have been indicted by the Department of Justice for allegedly spying on behalf of Saudi Arabia, adds Sean Lawson, an Adjunct Scholar at the Modern War Institute at West Point and Associate Professor at the University of Utah. The Washington Post reported that the two employees are accused of “accessing the company’s information on dissidents who use the platform” and passing that information to the Saudi government, he writes for Forbes.

National Endowment for Democracy board member Eileen Donahoe told a recent forum that preventing disinformation must include media literacy and public awareness. She also questioned Michael Kratsios, the Chief Technology Officer of the United States, at the 2019 Fall Conference of the Institute for Human-Centered Artificial Intelligence (HAI).